Today I did a 5 minute rapid fire talk at the RSS 2018 International Conference hosted in Cardiff City Hall. My talk was in RF1 session, held at 10am in the council chamber. Other talks in the session included:

- Using Spatio-temporal clustering to model antibiotic use. Jonathan Ansell - The University of Edinburgh Business School, United Kingdom.

- Predictive Modelling: What’s the customer’s propensity to pay?. Vasiliki Bampi - Dwr Cymru Welsh Water.

- On the sample mean after a group sequential trial. Ben Berckmoes - Universiteit Antwerpen, Belgium.

- Detecting signals for adverse drug reactions (ADRs) using non- constant hazard in longitudinal data: a comparison of three model-based methods. Victoria Cornelius - Imperial College London, United Kingdom.

- A new gamma-generalised gamma mixture cure fraction model: Application to ovarian cancer at the University College Hospital, Ibadan, Nigeria. Serifat Folorunso - Department of Statistics, University of Ibadan, Nigeria.

Mapping the urban forest

I have put my slides here for anyone interested in our urban forest project.

Introduction

In the data science campus, we have been researching computer vision based approaches to automatically detect and map street-trees and vegetation in urban environments.

Trees provide a wide range of social, economic and environmental benefits. According to a recent ONS study , the UK’s trees removed nearly 1.5 billion kilograms of air pollution in a single year, resulting in an annual benefit of £1 billion. For Cardiff, the annual benefit is close to £8 million.

In cities, cars are a major source of pollution. As such, this work focuses on detecting trees around the urban road network.

Aims

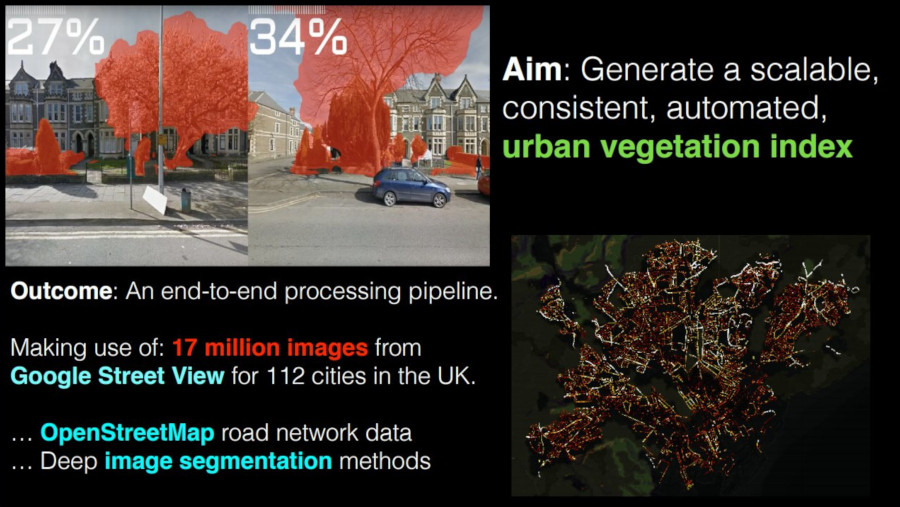

The main aim of this project is to produce an urban vegetation index in a scalable and consistent way.

By scalable, I mean that it should be possible to deploy the approach across the UK, and by consistent, I mean that the approach should be reproducible and comparable with existing methods.

To achieve this, we have made use of ~17 million Google street view images, focussing on the 112 major towns and cities as defined by ONS Geography.

Using data from OpenStreetMap, we calculate 10 metre intervals along the entire urban road network and then we take the corresponding street view images for each point and generate a percent vegetation score.

Initial approaches

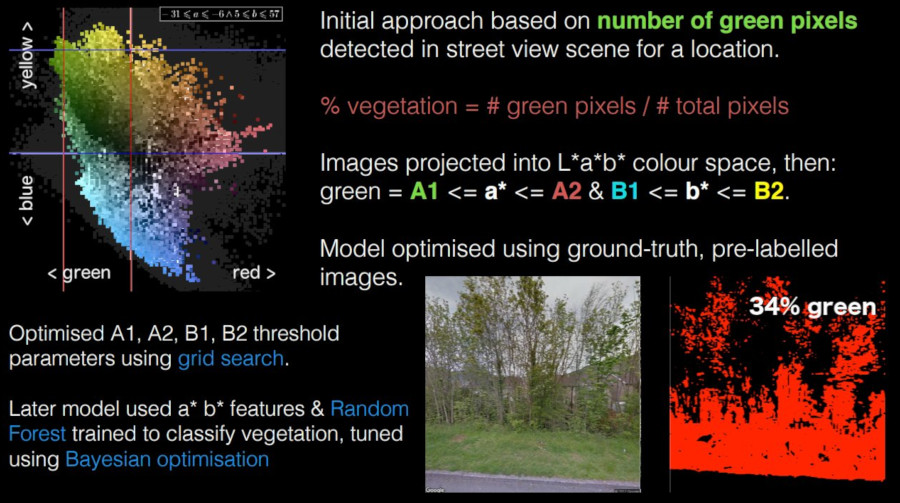

Our initial approach was based on the idea that the amount of green visible in the scene could be used as a proxy measure for vegetation.

Specifically, we defined a basic metric based on the ratio of detected green pixels in an arbitrary street-level image.

To do this, we project the image into the linearly separable L*a*b* colour space and then label pixels as green if the pixel (a*, b*) values are within the range of a thresholding function.

Here, we see a visualisation of the L*a*b* colour distribution for all pixels belonging to the vegetation class in our ground-truth data (We have made use of the excellent Mapillary Vistas Dataset in this project). Our initial model will label a pixel as vegetation if it falls within the region delimited by the (A1, A2) and (B1, B2) parameters.

We later implemented a non-linear thresholding function, focusing on the elliptic region of green distribution (inside the square threshold). In both cases, we performed hyper-parameter optimisation using grid-based and Bayesian parameter search.

Current approach

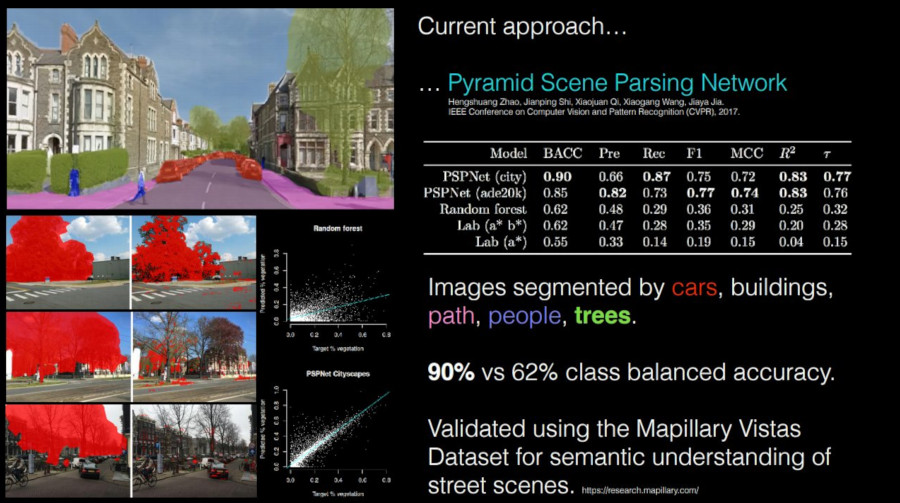

Of course, the assumption that green corresponds to vegetation has a number of issues. Specifically, our initial model was not robust to the seasonality of trees, tree species, and of course to the presence of green cars etc. As such, we were only able to achieve a class balanced accuracy of 62% with respect to our ground-truth validation data.

Our current prototype makes use of a deep neural network architecture: A Pyramid Scene Parsing Network (PSPNet), which has been demonstrated to be highly effective at segmenting street-level imagery into various components such as cars, buildings, people and trees.

By using this model, we are able to detect vegetation with 90% accuracy in a way which is robust to the issues mentioned before.

Above, (left), we can see vegetation classified by the PSPNet vs our initial approach (right). Notice that the new PSPNet is able to detect leafless trees.

Summary

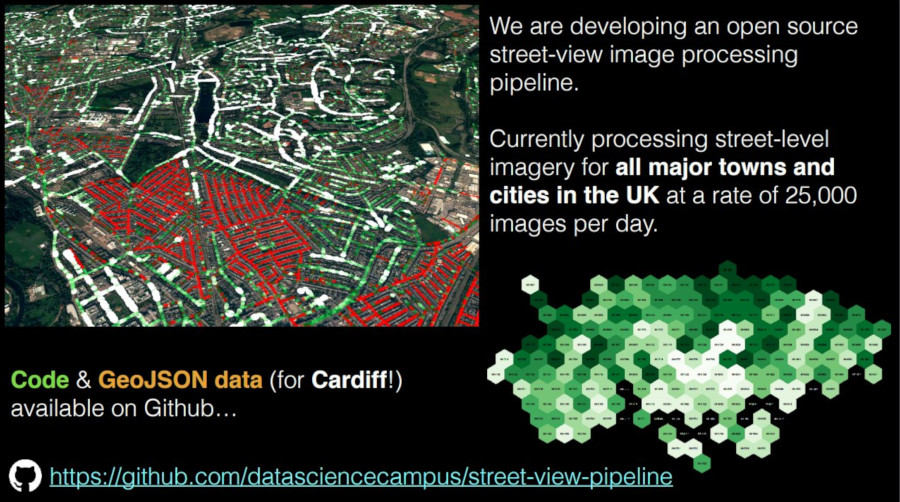

The main result of this work has been the development of an open-source, end-to -end image processing pipeline which we are currently using to sample street-view images at a (capped) rate of 25,000 images per day.

We currently have partial data for England and Wales and complete data for Cardiff, Newport and Manchester.

All of our work is available on Github. We will be publishing a technical paper within the next couple of weeks.

Thanks for reading.

Code and data

- openstreetmap-network-sampling - Generate equidistant points along urban area road network.

- street-view-pipeline - Distributed Google street view image processing pipeline. Inc. data.