As part of the Synthetic Data project at the Data Science Campus, we investigated some existing data synthesis techniques and explored whether they could be used to create large-scale synthetic data. In this brief blog, we explore one family of algorithms used as a baseline in the work. These techniques are usually used to balance datasets for classification. We look at how they work, and how and when they can be used. We also show how they can be a quick and effective way to synthesise data from a given distribution.

Addressing the imbalance

A dataset is imbalanced if the classification categories are not approximately equally represented. Many real-world datasets are imbalanced, comprising predominantly ‘normal’ examples with only a small percentage of ‘abnormal’ examples. It is these underrepresented minority classes that are often of most interest, e.g., when detecting instances such as fraud or rare cancers. Machine learning algorithms have trouble classifying correctly when one class dominates the other. They can learn to label all inputs as the majority class and still achieve high accuracy scores.

Measuring the minority

Predictive accuracy alone is not an appropriate measure of algorithm performance on imbalanced datasets. Applications often require a high rate of detection of the minority class and allow a small error rate in the majority class. Instead of using the traditional accuracy score, the outputs are evaluated using a confusion matrix. Two measures are derived from it: precision (how many of the predicted positives are true positives, which matters when the cost of false positives is high) and recall (how many of the actual positives were correctly predicted, which matters when the cost of false negatives is high). The balance of these two is often measured using the F1 score.
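As a rough illustration of why accuracy misleads, the sketch below computes accuracy, precision, recall and F1 from confusion-matrix counts. The function name `scores` and the 990 vs 10 class split are made up for the example.

```python
def scores(tp, fp, fn, tn):
    """Return (accuracy, precision, recall, F1) from confusion-matrix counts."""
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total
    # Guard against division by zero when nothing is predicted positive
    # or when there are no actual positives.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return accuracy, precision, recall, f1


# Hypothetical data: 990 majority ("normal") and 10 minority ("abnormal") cases.
# A model that labels everything as majority never predicts a positive.
always_majority = scores(tp=0, fp=0, fn=10, tn=990)

# A model that finds 8 of the 10 minority cases at the cost of 20 false alarms.
useful_model = scores(tp=8, fp=20, fn=2, tn=970)

for name, result in [("always majority", always_majority),
                     ("useful model", useful_model)]:
    print(name, [round(value, 3) for value in result])
```

The first model scores 99% accuracy while detecting none of the minority cases; the second has slightly lower accuracy but a recall of 0.8, which is what the application usually cares about.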

The Receiver Operating Characteristic (ROC) curve is also a standard technique for summarising classification performance. It evaluates the trade-off between the true positive (TP) rate and the false positive (FP) rate. The Area Under the Curve (AUC) and ROC convex hull are traditional performance metrics derived from a ROC curve.
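One way to build intuition for the area under the ROC curve is that it equals the fraction of (positive, negative) pairs in which the positive example receives the higher score. The sketch below uses that pairwise definition rather than tracing the curve; the function name `roc_auc` and the labels and scores are invented for the example.

```python
def roc_auc(labels, scores):
    """AUC as the share of (positive, negative) pairs ranked correctly.

    Ties between a positive and a negative score count as half a win.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical labels (1 = minority class) and classifier scores.
labels = [0, 0, 0, 0, 1, 1]
model_scores = [0.1, 0.2, 0.6, 0.3, 0.7, 0.4]
auc = roc_auc(labels, model_scores)
print(auc)
```

Here 7 of the 8 positive-negative pairs are ordered correctly, giving an AUC of 0.875. The pairwise form is quadratic in the number of samples, so it is only meant for small illustrations.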

There are a number of approaches to addressing class imbalance and increasing sensitivity to the minority class:

- synthesis of new minority class instances

- over-sampling of minority class

- under-sampling of majority class

- combination of under- and over-sampling

- adjusting the cost function to make misclassification of the minority instances more important than misclassification of majority instances

In this summary, we will focus on the first approach, looking at some well-used techniques that create data to boost the number of minority class instances.

Techniques for minority synthesis

The Synthetic Minority Over-sampling Technique (SMOTE) synthesises new minority instances between existing (real) minority instances. It creates new synthetic instances according to the neighbourhood of each example of the minority class. It is a widespread solution used in imbalanced classification problems and it is available in several commercial and open source software packages.

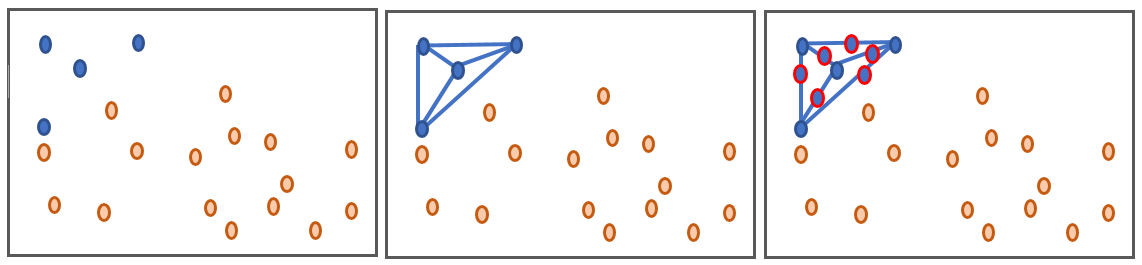

The technique was proposed in 2002 by Chawla, Bowyer, Hall, & Kegelmeyer, and is now an established method with over 85 extensions of the basic method reported in the specialised literature (Fernandez et al.). A way to visualise how the basic concept works is to imagine drawing a line between two existing minority data points. SMOTE then creates new synthetic instances somewhere on these lines.

In the above example, we start with an imbalance of 4 blue vs 16 orange instances. After synthesising, there are 10 blue vs 16 orange instances, with the blue instances dominating within the data ranges typical of these minority samples. This is a key aspect of the technique. Simple over-sampling with replacement focuses data in very specific regions of the decision region for the minority class: it does not add data in the general region of the minority instances, only at their exact locations, and so it can cause models to overfit to the data. SMOTE uses synthetic data rather than replacement.

The minority classes are oversampled by taking each minority class sample and introducing synthetic examples along the line segments joining it to any or all of its k nearest minority class neighbours. This forces the decision region of the minority class to be more general, leading to decision trees that generalise better. Considering a sample xi, a new sample xnew will be generated from its k nearest neighbours. The steps are:

- take the difference between the feature vector sample xi under consideration and its nearest neighbour xzi

- multiply the difference by a random number γ between 0 and 1 and add this to the sample vector to generate a new sample xnew

xnew=xi+γ(xzi-xi)
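The steps above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the imbalanced-learn implementation: the function name `smote`, its parameters and the four example points are hypothetical, xi is picked at random from the minority samples, and neighbours are found by brute-force Euclidean distance.

```python
import math
import random


def smote(minority, n_new, k=5, seed=0):
    """Generate n_new synthetic points from a list of minority feature vectors."""
    if len(minority) < 2:
        raise ValueError("need at least two minority samples")
    rng = random.Random(seed)
    k = min(k, len(minority) - 1)  # cannot have more neighbours than other samples
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        xi = minority[i]
        # k nearest minority neighbours of xi, excluding xi itself.
        others = [x for j, x in enumerate(minority) if j != i]
        neighbours = sorted(others, key=lambda x: math.dist(xi, x))[:k]
        xzi = rng.choice(neighbours)
        gamma = rng.random()  # random number in [0, 1)
        # xnew = xi + gamma * (xzi - xi), applied feature by feature.
        synthetic.append([a + gamma * (b - a) for a, b in zip(xi, xzi)])
    return synthetic


# Four hypothetical minority points; six new ones take the class from 4 to 10.
minority = [[1.0, 1.0], [1.5, 2.0], [2.0, 1.0], [2.5, 2.5]]
new_points = smote(minority, n_new=6, k=2)
print(new_points)
```

Because γ lies between 0 and 1, every new point sits on the line segment between two real minority points, so no synthetic value can fall outside the range already covered by the minority class.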

A variation of this approach is the Adaptive Synthetic (ADASYN) sampling method. It operates in a similar way to regular SMOTE, except that the number of samples generated for each xi is proportional to the number of samples in a given neighbourhood that are not from the same class as xi. SMOTE will connect inliers and outliers in the data, while ADASYN can focus solely on outliers. Either behaviour can sometimes lead to suboptimal decision functions. To help address this, SMOTE has different implementation options for generating samples, hence the many extensions to regular SMOTE.

Variations on a theme

ADASYN and the SMOTE variants differ in the way they select the samples xi ahead of generating new ones. The table below summarises how some of the variations differ:

| Sampling method | Choice of neighbour |
|---|---|
| SMOTE regular | Randomly pick from all possible xi. |
| SMOTE SVM | Uses an SVM classifier to find support vectors and generates samples using them. |
| ADASYN | Similar to regular SMOTE, except the number of samples generated for each xi is proportional to the number of samples in a given neighbourhood that are not from the same class as xi. |
| SMOTE borderline1, SMOTE borderline2 | Classifies each sample xi as 1) noise (all nearest neighbours are from a different class), 2) danger (at least half the nearest neighbours are from a different class) or 3) safe (all nearest neighbours are from the same class). Borderline SMOTE operates only on the danger samples. For Borderline1, xzi will belong to a class different from that of xi. For Borderline2, xzi can belong to any class. |

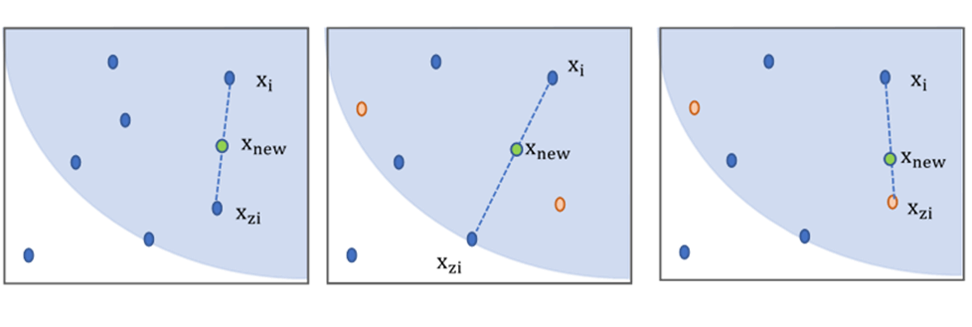

The diagram above illustrates this visually, showing examples of regular SMOTE (left), Borderline 1 (middle) and Borderline 2 (right) for k=6. The k nearest neighbours are shown in the light blue area and the minority class samples are shown in blue.

The difference is how the xi value is selected before finding xzi and generating xnew. For regular SMOTE, xi is randomly selected from all minority class examples. For borderline, we only pick samples that are classed as ‘in danger’, i.e., at least half the nearest neighbours are from the opposite class. For borderline 1, xzi is from a different class to xi; for borderline 2 it can be from any class. If we were using ADASYN on our right-hand example, we would generate n samples, where n is proportional to 3 (the number of samples not from the same class as xi). Other extensions to the technique focus on variations such as the choice of initial samples xi, combining with undersampling, restricting the interpolation method when choosing the position of xnew, and noise filtering.
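The selection rules described above can be sketched by counting, for each minority sample, how many of its k nearest neighbours belong to another class. The sketch follows the 'in danger' description in the paragraph above; treating every sample with fewer than half of its neighbours from another class as safe is an assumption made here for completeness. The function names, the one-dimensional data and k=3 are all hypothetical.

```python
import math


def other_class_counts(X, y, minority_label, k):
    """For each minority sample index, count the k nearest neighbours from another class."""
    counts = {}
    for i, (xi, yi) in enumerate(zip(X, y)):
        if yi != minority_label:
            continue
        # Indices of the k nearest neighbours of xi among all other samples.
        nearest = sorted((j for j in range(len(X)) if j != i),
                         key=lambda j: math.dist(xi, X[j]))[:k]
        counts[i] = sum(1 for j in nearest if y[j] != yi)
    return counts


def borderline_category(n_other, k):
    """Label a minority sample as noise, danger or safe (see assumption above)."""
    if n_other == k:
        return "noise"
    if 2 * n_other >= k:
        return "danger"
    return "safe"


# Hypothetical 1-D data: five minority samples (label 1), six majority (label 0).
X = [[0.0], [1.0], [2.0], [4.4], [9.0],
     [5.0], [6.0], [7.0], [8.0], [10.0], [11.0]]
y = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
k = 3

counts = other_class_counts(X, y, minority_label=1, k=k)
categories = {i: borderline_category(c, k) for i, c in counts.items()}

# ADASYN-style allocation: each sample's share of the new points is
# proportional to its count of other-class neighbours.
total = sum(counts.values())
adasyn_share = {i: c / total for i, c in counts.items()}

print(categories)
print(adasyn_share)
```

In this toy data the three samples deep inside the minority region are safe, the sample at 4.4 is in danger and the isolated sample at 9.0 is noise. Borderline SMOTE would generate only from the sample at 4.4, whereas the ADASYN-style shares put 60% of the new points around the isolated sample, which illustrates how ADASYN can concentrate on outliers.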

Imbalanced datasets can be altered for use in machine learning by using SMOTE and ADASYN variations. Some variations may be more appropriate for some datasets than for others. Disjoint minority class instances, noisy data, class overlapping, big data sources and streaming data can all affect the suitability of different techniques, and a number of different extensions may need to be tried to find the best variation for your problem.

Application for general synthetic data generation

Synthetic data is data manufactured artificially rather than obtained by direct measurement. Government organisations, businesses, academia, members of the public and other decision-making bodies require access to a wide variety of administrative and survey data to make informed and accurate decisions. However, the collecting bodies are often unable to share rich microdata without risking breaking legal and ethical confidentiality and consent requirements.

This can often hinder the efforts of the data science community in providing more detailed and timely analysis to decision-makers to effectively tackle major challenges such as climate change, economic deprivation and global health. There has been much recent interest and research into the generation of synthetic data, and as part of a recent Data Science Campus project we compared the use of SMOTE with more recent techniques such as auto-encoders and generative adversarial networks (GANs).

The general methodology was as follows:

- take an existing dataset with n entries and make it imbalanced (by replicating the dataset and assigning a target class), or generate a dataset with different features and an imbalance of 2:1 (this results in a generated dataset with the same number of samples as the original)

- run SMOTE (different variants) to generate new data samples (n new samples)

- separate the new samples from the resampled dataset

- compare against the original dataset: compute a correlation matrix for all the original data and another for all the generated data, then subtract one from the other; the resulting difference matrix should be close to zero everywhere for well-matched generated data

- use the Bhattacharyya distance to measure the similarity between the original and generated probability distributions, along with the Pearson R correlation.

The methodology was implemented in Python using the imbalanced-learn library. A number of open datasets were used as testbeds to generate data; a summary is shown in the following section.
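The two comparison measures in the methodology can be sketched with the standard library alone. This is an illustration under stated assumptions rather than the project code: the helper names, the choice of four equal-width histogram bins and the small example columns are all hypothetical, and the Bhattacharyya distance is taken over normalised histograms as the negative log of the Bhattacharyya coefficient.

```python
import math


def pearson(a, b):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


def correlation_difference(original_cols, generated_cols):
    """Original correlation matrix minus the generated one (near zero is good)."""
    def corr(cols):
        return [[pearson(ci, cj) for cj in cols] for ci in cols]
    return [[o - g for o, g in zip(row_o, row_g)]
            for row_o, row_g in zip(corr(original_cols), corr(generated_cols))]


def histogram(values, bins, lo, hi):
    """Normalised histogram over `bins` equal-width bins on [lo, hi]."""
    width = (hi - lo) / bins
    counts = [0] * bins
    for v in values:
        idx = int((v - lo) / width)
        counts[max(0, min(idx, bins - 1))] += 1  # clamp edge values into range
    return [c / len(values) for c in counts]


def bhattacharyya_distance(p, q):
    """Negative log of the Bhattacharyya coefficient of two discrete distributions."""
    coefficient = sum(math.sqrt(pi * qi) for pi, qi in zip(p, q))
    return -math.log(coefficient) if coefficient > 0 else math.inf


# Hypothetical feature columns (values invented for the example).
original = [[25, 32, 47, 51, 38, 29, 60, 44],
            [40, 45, 50, 38, 40, 35, 20, 60]]
generated = [[27, 31, 45, 52, 36, 30, 58, 46],
             [41, 44, 48, 39, 40, 36, 24, 57]]

diff = correlation_difference(original, generated)

# Use a shared range so both histograms have identical bin edges.
lo = min(original[0] + generated[0])
hi = max(original[0] + generated[0])
distance = bhattacharyya_distance(histogram(original[0], 4, lo, hi),
                                  histogram(generated[0], 4, lo, hi))
print(diff)
print(distance)
```

A distance of zero means the two histograms are identical, and larger values mean less overlap. The result depends on the number of bins, so the same binning should be used whenever distances are compared across features or methods.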

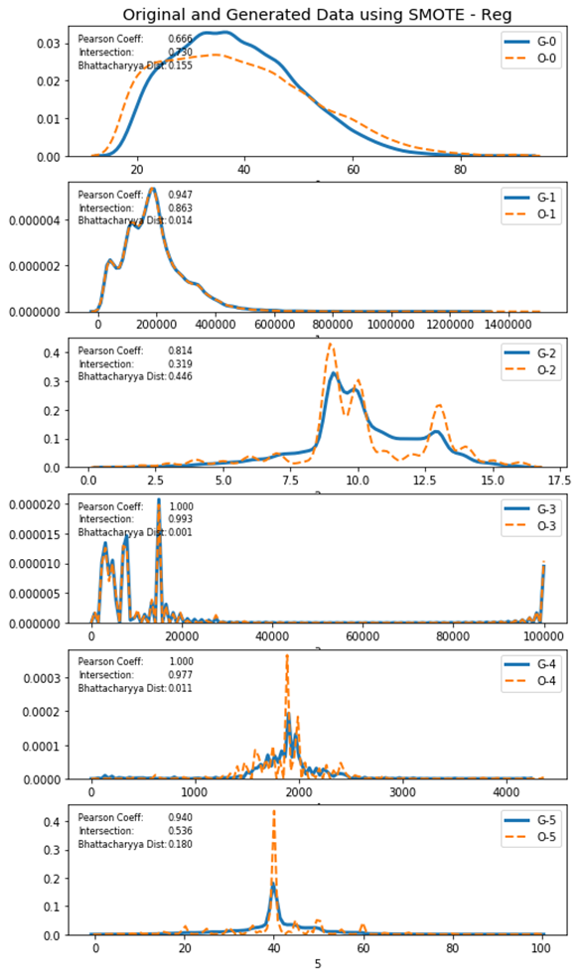

Example generated data - US adult census data

A number of different datasets were used, but here we will focus on the adult census data set from the UCI machine learning repository. This is a multivariate open data set derived from the US 1994 Census database, often used to predict whether income exceeds $50k/yr. The six continuous numeric features were used as the original data distributions. The original and generated data for this data set are shown below for the regular SMOTE method. The output was similar for the four SMOTE variations used. The original data is designated with Ox and generated data with Gx. For some features the generated data matches the original closely, but the method has a tendency to smooth and generalise the data if it is particularly noisy, and it can distort some asymmetrical distributions. We see this reflected in the quality measures, particularly the intersect and Bhattacharyya distance.

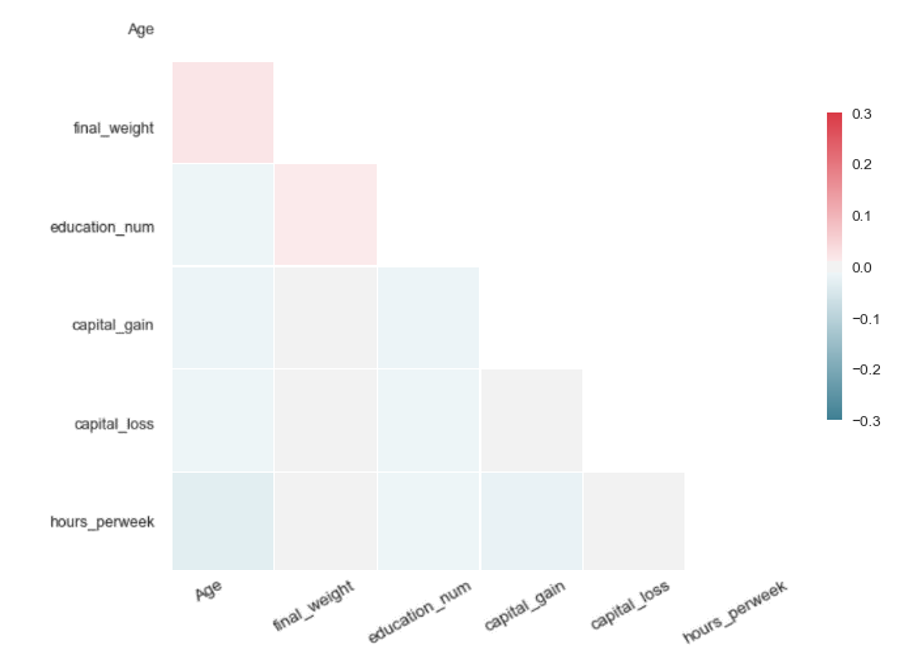

The differential correlation matrix shows very little difference between the original and generated data.

A question of privacy

There is a trade-off that needs to be made when generating synthetic data: the generated data should preserve the utility and representativeness of the original data while ensuring that the original data is not included and cannot be derived. A key area of interest is privacy, for example, ensuring that an observer using the data cannot tell if a particular individual's data was used in generating the data set. The synthetic data set should be resistant to identification and reconstruction attacks. Ensuring more privacy usually reduces the utility of the dataset. The balance between the two usually depends on the end use and must be considered carefully before using synthetic data.

Comparison with other techniques

Synthetic data generated by SMOTE was compared with outputs from auto-encoders and GANs. This is covered in detail in the Campus synthetic data full report. In our initial findings we see that SMOTE has the capability to generate data with high utility and representativeness, often on a par with or better than other techniques. For applications where utility is more important than privacy, SMOTE can offer a quick and easy method of generating data. It needs no specific set-up other than installing the appropriate library, and calculations are considerably faster than some of the learning-based approaches.

Future work

The initial focus was on continuous data sets. Future work should look at binary and categorised data features, using a wider variety of the SMOTE variations such as SMOTE-NC (SMOTE for Nominal and Continuous features, now implemented in imbalanced-learn) and ROSE.

The topic of privacy is of great interest, and a number of wider research projects and workshops are being held to investigate the relationship between utility and privacy in synthetic data generated by a variety of techniques. Various methods could be used to try to improve privacy using SMOTE, including adding a level of noise to the generated data, adjusting the criteria for selecting xi and xzi, and placing constraints on the parameter γ. These may provide interesting and useful methods for generating synthetic data sets for a variety of needs.